A Vaccination Simpson's Paradox

August 27, 2021 By Alan Salzberg

I know that the Pfizer and Moderna vaccines are highly effective against Covid, including the delta variant, so I was surprised to see data out of Israel a couple weeks ago seemingly showing that the vaccines weren't helping. As I will explain, it turns out the figures are a case of "Simpson's Paradox," and in fact the vaccines are probably more effective against delta than even the 90% you may have read.

Before I explain what Simpson's Paradox is (for a different example see this old post: https://salthillstatistics.com/posts/2), let's consider the table below, which shows serious cases (mostly hospitalizations) by vaccination status as of August 7 in Israel.

As you can see from the table, there were 110 serious cases among unvaxed, meaning 3.3 out of every 100,000 unvaxed people had a serious case, and there were 211 serious cases among vaxed, meaning 4.2 out of every 100,000 vaxed had a serious case. If you naively look at this data, you see 4.2 vs 3.3, and you conclude that the vaxed are worse off.

Not so fast! The problem with the summary above is it ignores a very serious confounder: age. If everyone at every age were equally vaccinated, that would not matter. However, the very people most susceptible (older) are much more likely to be vaccinated. In Israel, more than 90% of the over-60 population is vaccinated, while about 50% of the under-60 population is. In order to fix this problem and view the population on a like-to-like basis, we can break the serious cases down by age and vaccination, which I do in the table below.

In this table, you see the same 3.3 serious cases per 100K unvaxed versus 4.2 vaxed overall, but you can also see that for both age groups, being vaxed is better (69.8 serious cases per 100K unvaxed vs 14.9 vaxed in over 60s, and 1.4 unvaxed vs 0.4 vaxed in under 60s). This is called Simpson's Paradox, where the result for each subcategory (vaccine works) differs from the overall result (vaccine doesn't work). Whenever we see Simpson's paradox, we know that something is wrong with the overall comparison. It can't be that the vaccine is better for every age group but worse overall.
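To make the reversal concrete, here is a minimal sketch that takes only the per-100K rates quoted above and computes how many times higher the unvaxed rate is than the vaxed rate. The dictionary and function names are mine, just for illustration.

```python
# Serious cases per 100K, as quoted in the text: (unvaxed, vaxed).
rates_per_100k = {
    "overall": (3.3, 4.2),
    "over 60": (69.8, 14.9),
    "under 60": (1.4, 0.4),
}


def risk_ratio(unvaxed_rate, vaxed_rate):
    """How many times higher the unvaxed rate is than the vaxed rate."""
    return unvaxed_rate / vaxed_rate


ratios = {group: risk_ratio(*pair) for group, pair in rates_per_100k.items()}

for group, ratio in ratios.items():
    verdict = "vaxed better" if ratio > 1 else "vaxed look worse"
    print(f"{group:>8}: unvaxed/vaxed = {ratio:.2f} ({verdict})")
```

The overall ratio comes out below 1 while both age-group ratios come out well above 1, which is exactly the paradox.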

The paradox occurs because of weighting. In the overall result, unvaxed older people comprise less than 10% of the total unvaxed population, while vaxed older people comprise about 75% of the vaxed population. Because older people are up to hundreds of times more likely to be seriously ill from Covid, age is even more important than a vaccine that reduces a disease, say, ten-fold. In essence, we are not doing a like-to-like comparison. Instead, by just looking at the overall, we are effectively comparing an older vaccinated person with a younger unvaccinated individual.
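The weighting effect is easy to reproduce with a made-up population. The sketch below is only an illustration: the counts are hypothetical, chosen so that the vaccine cuts the serious-case rate in each age group while older people make up a much larger share of the vaxed than of the unvaxed. They are not the Israeli figures.

```python
# HYPOTHETICAL counts, chosen only to illustrate the mechanism.
# (age group, status) -> (people, serious cases)
toy = {
    ("over 60", "unvaxed"): (50_000, 35),
    ("over 60", "vaxed"): (950_000, 133),
    ("under 60", "unvaxed"): (3_000_000, 42),
    ("under 60", "vaxed"): (3_000_000, 12),
}


def rate_per_100k(cases, people):
    return 100_000 * cases / people


def group_rate(age, status):
    """Serious-case rate per 100K within one age group."""
    people, cases = toy[(age, status)]
    return rate_per_100k(cases, people)


def overall_rate(status):
    """Serious-case rate per 100K with both age groups pooled."""
    people = sum(p for (_, s), (p, _) in toy.items() if s == status)
    cases = sum(c for (_, s), (_, c) in toy.items() if s == status)
    return rate_per_100k(cases, people)


def older_share(status):
    """Fraction of this status group that is over 60."""
    older = toy[("over 60", status)][0]
    total = sum(p for (_, s), (p, _) in toy.items() if s == status)
    return older / total


for status in ("unvaxed", "vaxed"):
    print(
        f"{status:>8}: over 60 = {group_rate('over 60', status):.1f}, "
        f"under 60 = {group_rate('under 60', status):.1f}, "
        f"overall = {overall_rate(status):.1f}, "
        f"share over 60 = {older_share(status):.0%}"
    )
```

In this toy population the vaxed do better in each age group, yet their pooled rate is higher, purely because the pooled vaxed group is so much older.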

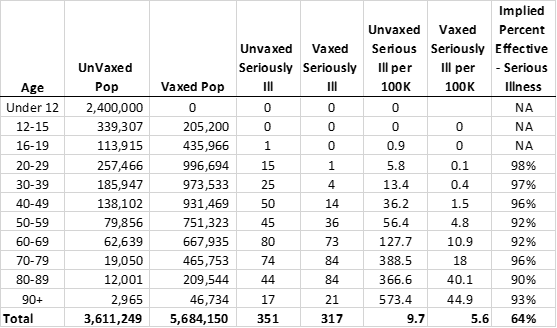

While the breakdown of 60+ vs under 60 shows the vaccine works, the effectiveness of 4.7 to 1 for over 60s and 3.5 to 1 for under 60s still seems lower than what I have read elsewhere. This is because even within the over 60s or the under 60s, the older, or more susceptible, are still more likely to get vaccinated. Therefore, even within the 60+ group, for example, our comparison is of an older vaccinated person (maybe 80 years old on average) and a younger unvaxed person (maybe 65 years old). How do I know this? Vaccination rates keep rising with age, even among the over 60s.

I went back to the Israel data dashboard today (find it here: https://www.gov.il/en/departments/guides/information-corona by clicking on the HE language option) and analyzed vaccinations not just for over and under 60, but by 10-year age intervals. Here, I use more recent data (when there are more serious cases overall). For the table below, I added a percent effectiveness column, showing the percent effectiveness of the vaccine against serious infection, by age.
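For readers moving between the "N to 1" ratios and percent effectiveness, here is a small sketch of the usual conversion, effectiveness = 1 - (vaxed rate / unvaxed rate). I am assuming this is the definition behind the percent column; the function name is mine.

```python
def effectiveness_from_ratio(unvaxed_to_vaxed_ratio):
    """Convert an 'N to 1' risk ratio into fractional effectiveness.

    ASSUMPTION: effectiveness = 1 - vaxed_rate / unvaxed_rate.
    """
    return 1 - 1 / unvaxed_to_vaxed_ratio


for ratio in (3.5, 4.7, 10):
    print(f"{ratio} to 1 -> {effectiveness_from_ratio(ratio):.0%} effective")
```

So 3.5 to 1 and 4.7 to 1 correspond to roughly 71% and 79%, and it takes a ten-fold reduction to reach 90%.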

Now that we've broken it down by age, you can see that the vaccine is well above 90% effective against serious infection (some of the 60+ have now gotten a third shot, so those numbers may not reflect two-shot effectiveness). Some interesting things to note:

1. So few people get seriously ill under 20 that you cannot even measure effectiveness against serious illness. However, I looked at separate data for infections in the 12-19 age group and the vaccine appears to be 70-90% effective against any infection in that group.

2. The vaccine is more effective for the 20-50 age group than for older people. However, it is still 90% or more effective in 50+.

3. Simpson's paradox is still at work, showing a deceptive 64% overall effectiveness, even though the vaccine is 90% or more effective in every age category. This is the age-bias of vaccinations at work again.

So what's the takeaway? The vaccine is highly effective against serious illness in all age ranges (that matter). When you hear anecdotally about a lot of breakthrough illnesses that are serious, remember that there is a big bias because a much higher percentage of susceptible people are vaccinated. Only with both age and population adjustment can you get to a decent estimate of effectiveness.
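One common way to do this kind of adjustment is direct age standardization: apply each group's age-specific rates to the same reference age mix, then compare. The sketch below uses the two-group rates quoted earlier and an assumed reference population that is 15% over 60. That share is a placeholder of mine, not a figure from the dashboard, and this is not necessarily how the tables in this post were built.

```python
# Serious cases per 100K from the two-group breakdown: (unvaxed, vaxed).
age_rates = {"over 60": (69.8, 14.9), "under 60": (1.4, 0.4)}

# ASSUMPTION: hypothetical reference population, 15% over 60.
standard_share = {"over 60": 0.15, "under 60": 0.85}

UNVAXED, VAXED = 0, 1


def standardized_rate(status_index):
    """Rate per 100K if this group had the reference age mix."""
    return sum(
        standard_share[age] * age_rates[age][status_index] for age in age_rates
    )


unvaxed_adj = standardized_rate(UNVAXED)
vaxed_adj = standardized_rate(VAXED)
adjusted_effectiveness = 1 - vaxed_adj / unvaxed_adj

print(f"age-adjusted unvaxed rate: {unvaxed_adj:.2f} per 100K")
print(f"age-adjusted vaxed rate:   {vaxed_adj:.2f} per 100K")
print(f"age-adjusted effectiveness: {adjusted_effectiveness:.0%}")
```

With only two broad age bands the adjusted figure still understates effectiveness, for the reason given above: age differs between vaxed and unvaxed within each band too. Finer bands, like the 10-year intervals, get closer.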